Hi

I get a model warning pointing to line 209 of the init.py file in the AddaxAI files/env whenever I run AddaxAI. It also makes the model slow to start, which occasionally times out AddaxAI and has caused it to crash. I also suspect the GPU isn’t being fully utilised when running analysis.

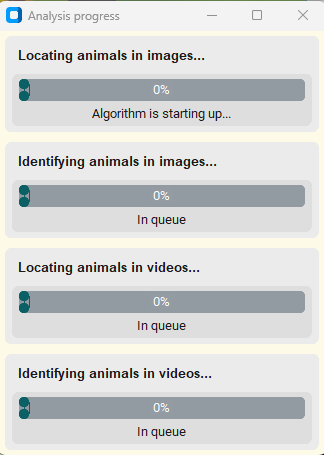

Typically it does end up running and completing, but with a long delay at the ‘starting algorithm’ stage.

This line relates to CUDA compatibility with the installed PyTorch version, which I assume means Addax doesn’t recognise my GPU as compatible.

The GPU is an RTX 5090; nvidia-smi reports driver version 581.29 and CUDA version 13.

Could AddaxAI be throwing the error because the 5090 is too new and/or not on the compatible list that its PyTorch version has? Or, might Addax be having another issue that also kicks up this error?
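
To narrow this down, a check along these lines could be run in the Python environment that AddaxAI uses. It is only a sketch: `arch_supported` is a hypothetical helper name of my own, and it assumes the warning comes from the GPU's compute capability (which I believe is 12.0, i.e. `sm_120`, for the 5090) missing from the architectures the installed PyTorch was built for. It only looks for an exact `sm_XY` match and ignores any PTX (`compute_XY`) entries that might still let kernels be compiled on the fly.

```python
def arch_supported(capability, arch_list):
    """Return True if arch_list has an exact sm_XY entry for this capability.

    capability is a (major, minor) tuple, e.g. (8, 6) -> "sm_86".
    PTX entries such as "compute_90" are deliberately not considered.
    """
    major, minor = capability
    return f"sm_{major}{minor}" in arch_list


def main():
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed in this environment")
        return

    print("PyTorch version:", torch.__version__)
    print("Built against CUDA:", torch.version.cuda)

    if not torch.cuda.is_available():
        print("CUDA is not available to this PyTorch build")
        return

    capability = torch.cuda.get_device_capability(0)
    arch_list = torch.cuda.get_arch_list()

    print("GPU:", torch.cuda.get_device_name(0))
    print("Compute capability:", capability)
    print("Architectures in this build:", arch_list)
    print("Exact match for this GPU:", arch_supported(capability, arch_list))


if __name__ == "__main__":
    main()
```

If the last line prints `False`, that would fit the idea that the bundled PyTorch predates the card rather than AddaxAI having a separate problem.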

I’m operating at the real edge of my knowledge around CUDA and PyTorch, and I may have made wrong assumptions about AddaxAI and its PyTorch environment.

Anyone else had a similar issue?

Loving Addax and all that it’s helping with, but just keep hitting this snag.

Cheers